Introduction

Artificial Intelligence (AI) has gained significant prominence in recent years, transforming industries and augmenting human capabilities across many domains. As its influence expands, it becomes essential to understand the vocabulary that surrounds it. This AI glossary is a resource for anyone navigating the field, providing clear and concise definitions of its key terms.

AI Glossary

AI refers to the simulation of human intelligence in machines, enabling them to perform tasks that typically require human intelligence. These tasks include problem-solving, learning, reasoning, perception, and language understanding.

Machine Learning (ML)

ML is a subset of artificial intelligence that focuses on enabling machines to learn from data and improve their performance without being explicitly programmed. It involves algorithms that allow systems to automatically analyze and interpret data.

Supervised Learning

Supervised learning is a type of machine learning where the algorithm is trained on labeled data, with known input-output pairs. The algorithm learns to make predictions or decisions based on the provided training examples.
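
To make the idea of learning from labeled input-output pairs concrete, here is a minimal sketch of a 1-nearest-neighbour classifier. The animal measurements, the labels, and the function name `predict_nearest` are illustrative assumptions, not part of any particular library.

```python
# Toy supervised learning: a 1-nearest-neighbour classifier.
# The training data and names below are made up for illustration.

def predict_nearest(training_pairs, query):
    """Return the label of the training input closest to `query`."""
    _, nearest_label = min(
        training_pairs,
        # Squared Euclidean distance between a training input and the query.
        key=lambda pair: sum((a - b) ** 2 for a, b in zip(pair[0], query)),
    )
    return nearest_label

# Labeled input-output pairs: (height_cm, weight_kg) -> animal label.
training_pairs = [
    ((30.0, 4.0), "cat"),
    ((35.0, 5.0), "cat"),
    ((60.0, 25.0), "dog"),
    ((70.0, 30.0), "dog"),
]

prediction = predict_nearest(training_pairs, (33.0, 4.5))
print(prediction)  # prints "cat"
```

The "training" here is simply storing the labeled examples; most supervised algorithms instead fit parameters to them, but the input-output structure is the same.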

Unsupervised Learning

Unsupervised learning is a type of machine learning where the algorithm is trained on unlabeled data. The algorithm discovers patterns and structures in the data without explicit guidance.
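
As one example of discovering structure without labels, the sketch below runs k-means clustering on a handful of one-dimensional points. The data, the starting centroids, and the function name `k_means_1d` are assumptions chosen to keep the example small.

```python
# Toy unsupervised learning: k-means clustering on 1-D points.
# No labels are given; the algorithm groups points by proximity.

def k_means_1d(points, centroids, iterations=10):
    """Alternate between assigning points and updating centroids."""
    centroids = list(centroids)
    clusters = [[] for _ in centroids]
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            idx = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[idx].append(p)
        # Update step: each centroid moves to the mean of its cluster.
        centroids = [
            sum(c) / len(c) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
    return centroids, clusters

points = [1.0, 1.5, 2.0, 9.0, 10.0, 11.0]
centroids, clusters = k_means_1d(points, centroids=[0.0, 5.0])
print(centroids)  # prints [1.5, 10.0]
```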

Reinforcement Learning

Reinforcement learning is a type of machine learning where an agent learns to interact with an environment to maximize rewards or minimize penalties. The agent receives feedback in the form of rewards or punishments based on its actions.
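
The reward-driven loop can be sketched with tabular Q-learning, one common reinforcement learning algorithm, on a tiny corridor where the agent earns a reward for reaching the rightmost cell. The environment, the hyperparameters, and all names here are hypothetical choices made for illustration.

```python
import random

# Toy reinforcement learning: tabular Q-learning in a 4-cell corridor.
N_STATES = 4        # states 0..3; state 3 is the goal
ACTIONS = (-1, +1)  # move left or move right

def step(state, action):
    """Apply an action; reward 1.0 only when the goal is reached."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward

def train(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            a = rng.randrange(len(ACTIONS))  # explore uniformly at random
            next_state, reward = step(state, ACTIONS[a])
            # Q-learning update: move the estimate toward
            # reward + discounted best value of the next state.
            target = reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q

q_table = train()
# Greedy policy for the non-goal states: pick the higher-valued action.
greedy_policy = [ACTIONS[row.index(max(row))] for row in q_table[:-1]]
print(greedy_policy)
```

After training, the greedy policy should favour moving right in every non-goal state, since that is the only direction that leads to the reward.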

Deep Learning

Deep learning is a subfield of machine learning that focuses on neural networks with multiple layers. It enables machines to learn hierarchical representations of data and has achieved remarkable success in various applications, such as image and speech recognition.

Neural Networks

Neural networks are computational models inspired by the structure and function of the human brain. They consist of interconnected nodes, or artificial neurons, that process and transmit information.

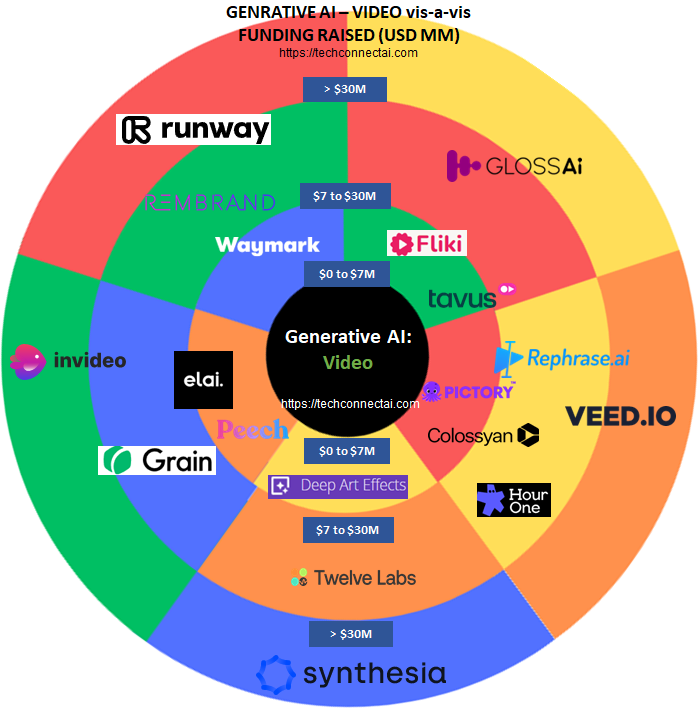

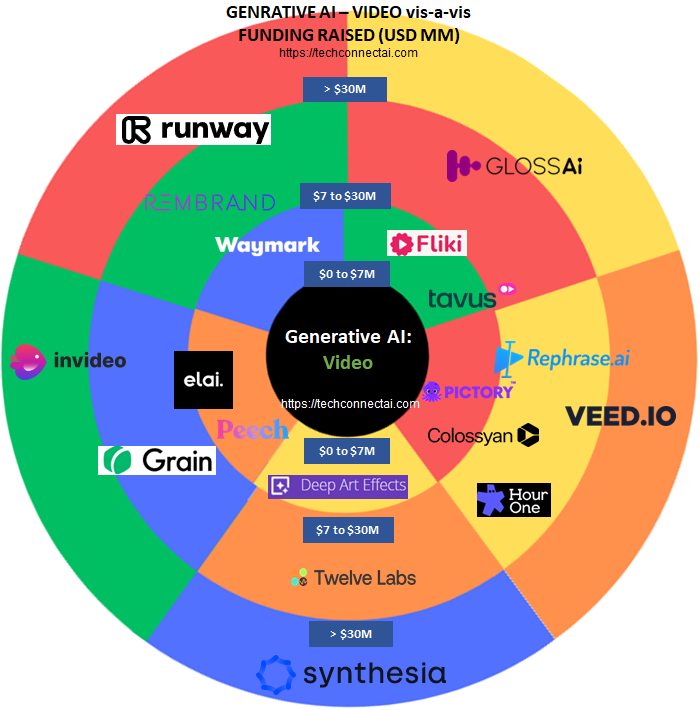

Natural Language Processing (NLP)

Natural Language Processing (NLP) involves the interaction between computers and human language. It focuses on enabling machines to understand, interpret, and generate human language.

Sentiment Analysis

Sentiment analysis is a technique used to determine the sentiment or emotion expressed in a piece of text. It is commonly used to analyze social media posts, customer reviews, and feedback.
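
One very simple way to approximate this is a word-list (lexicon) approach, sketched below. The word lists and the function name `sentiment` are illustrative assumptions; production systems typically use trained models rather than fixed lists.

```python
# Toy lexicon-based sentiment analysis.
# The word lists are tiny, hand-picked examples, not a real lexicon.
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "poor", "hate", "terrible", "sad"}

def sentiment(text):
    """Label text by counting positive and negative words."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

print(sentiment("I love this phone, the camera is great!"))  # prints "positive"
print(sentiment("Terrible battery and poor support."))       # prints "negative"
```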

Named Entity Recognition (NER)

Named Entity Recognition (NER) is a process of identifying and classifying named entities, such as names of people, organizations, locations, and dates, in text data. It is essential for tasks like information extraction and text summarization.

Text Classification

Text classification involves assigning predefined categories or labels to text documents based on their content. It is widely used in tasks such as spam detection, sentiment analysis, and topic classification.

Computer Vision

Computer vision focuses on enabling machines to interpret and understand visual information from images or videos. It involves tasks such as object detection, image recognition, and image segmentation.

Object Detection

Object detection is the task of identifying and localizing objects of interest within an image or video. It is widely used in applications like autonomous vehicles, surveillance systems, and object recognition.

Image Recognition

Image recognition involves training machines to recognize and classify objects or patterns within images. It has applications in various fields, including medical imaging, biometrics, and quality control.

Image Segmentation

Image segmentation is the process of dividing an image into multiple segments or regions to facilitate understanding and analysis. It is useful in applications such as object extraction, image editing, and medical imaging.

Robotics

Robotics combines AI, machine learning, and engineering to design and develop intelligent robots capable of performing tasks autonomously. It encompasses various areas such as industrial automation, healthcare, and exploration.

Autonomous Robots

Autonomous robots are robots capable of performing tasks without human intervention. They rely on AI algorithms and sensors to perceive their environment and make decisions.

Humanoid Robots

Humanoid robots are robots designed to resemble and interact with humans. They often incorporate AI technologies to mimic human behaviors and perform tasks that require human-like dexterity.

Computer Vision in Robotics

Computer vision plays a vital role in robotics by enabling robots to perceive and understand visual information from their surroundings. It helps robots navigate, recognize objects, and interact with the environment.

AI in Industrial Automation

AI in industrial automation involves the use of AI technologies to enhance efficiency, productivity, and safety in manufacturing and industrial processes. It includes tasks like predictive maintenance, quality control, and autonomous assembly.

Data Science

Data science is an interdisciplinary field that combines scientific methods, statistics, and AI techniques to extract insights and knowledge from data. It involves tasks such as data analysis, data visualization, and predictive modeling.

Data Analysis

Data analysis involves the process of inspecting, cleaning, transforming, and modeling data to discover useful information, draw conclusions, and support decision-making.

Data Preprocessing

Data preprocessing involves preparing and cleaning raw data to ensure its suitability for analysis. It includes tasks such as data cleaning, missing value imputation, and feature scaling.
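
Two of the tasks named above, missing value imputation and feature scaling, can be sketched in a few lines. The sample values and the function names `impute_mean` and `min_max_scale` are assumptions for illustration; mean imputation and min-max scaling are only one option each among several.

```python
# Toy data preprocessing: mean imputation followed by min-max scaling.

def impute_mean(values):
    """Replace missing entries (None) with the mean of the known values."""
    known = [v for v in values if v is not None]
    mean = sum(known) / len(known)
    return [mean if v is None else v for v in values]

def min_max_scale(values):
    """Rescale values linearly into the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # constant column: avoid division by zero
    return [(v - lo) / (hi - lo) for v in values]

raw = [10.0, None, 30.0, 20.0]
filled = impute_mean(raw)
scaled = min_max_scale(filled)
print(filled)  # prints [10.0, 20.0, 30.0, 20.0]
print(scaled)  # prints [0.0, 0.5, 1.0, 0.5]
```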

Data Visualization

Data visualization refers to the representation of data in graphical or visual formats. It helps to communicate complex patterns, trends, and relationships in data more effectively.

Feature Engineering

Feature engineering is the process of creating new features or transforming existing features to enhance the performance of machine learning models. It aims to capture relevant information and patterns in the data.

Model Evaluation

Model evaluation assesses the performance and effectiveness of machine learning models. It involves metrics such as accuracy, precision, recall, and F1-score to measure the model’s predictive capabilities.
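
For a binary classifier, these four metrics follow directly from the counts of true and false positives and negatives. The sketch below computes them from scratch; the labels and the function name `classification_metrics` are made-up examples.

```python
# Accuracy, precision, recall, and F1-score for binary labels (1 = positive).

def classification_metrics(y_true, y_pred):
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)  # true positives
    tn = sum(t == 0 and p == 0 for t, p in pairs)  # true negatives
    fp = sum(t == 0 and p == 1 for t, p in pairs)  # false positives
    fn = sum(t == 1 and p == 0 for t, p in pairs)  # false negatives

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if precision + recall
        else 0.0
    )
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 0, 1, 0, 1, 0, 1, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

In this example there are 3 true positives, 3 true negatives, 1 false positive, and 1 false negative, so all four metrics come out to 0.75.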

Internet of Things (IoT)

The Internet of Things (IoT) refers to the network of interconnected physical devices, vehicles, appliances, and other objects embedded with sensors, software, and connectivity. It enables them to collect and exchange data.

Smart Devices

Smart devices are physical objects embedded with sensors, processors, and connectivity capabilities. They can communicate with other devices and perform tasks autonomously or in response to user commands.

Sensor Networks

Sensor networks consist of interconnected sensors that collect and transmit data from the physical environment. They play a crucial role in enabling IoT devices to sense and interact with their surroundings.

Data Integration

Data integration involves combining data from multiple sources and systems to create a unified and comprehensive view. In the context of IoT, it ensures seamless data flow and interoperability between different devices and platforms.

Edge Computing

Edge computing is a distributed computing paradigm that brings computational capabilities closer to the data source or edge devices. It reduces latency, optimizes network bandwidth, and enhances real-time processing in IoT applications.

Big Data

Big data refers to extremely large and complex datasets that cannot be easily managed, processed, or analyzed using traditional methods. It encompasses the four V’s: volume, velocity, variety, and veracity.

Data Volume

Data volume refers to the vast amount of data generated and collected from various sources. It poses challenges in terms of storage, processing, and analysis.

Data Velocity

Data velocity refers to the speed at which data is generated, captured, and processed. It emphasizes real-time or near real-time data streams and requires efficient processing mechanisms.

Data Variety

Data variety refers to the diverse formats, types, and structures of data, including structured, unstructured, and semi-structured data. It requires flexible and adaptable techniques for analysis.

Data Veracity

Data veracity refers to the accuracy, reliability, and trustworthiness of data. In big data environments, data quality and integrity are crucial considerations for making informed decisions.

Cloud Computing

Cloud computing refers to the delivery of computing services, including servers, storage, databases, software, and analytics, over the internet. It provides on-demand access to shared resources and scalable computing power.

Infrastructure as a Service (IaaS)

Infrastructure as a Service (IaaS) is a cloud computing model that provides virtualized computing resources over the internet. It allows users to rent virtual machines, storage, and networking infrastructure.

Platform as a Service (PaaS)

Platform as a Service (PaaS) is a cloud computing model that provides a platform and environment for developing, testing, and deploying applications. It eliminates the need for infrastructure management and focuses on application development.

Software as a Service (SaaS)

Software as a Service (SaaS) is a cloud computing model that delivers software applications over the internet. Users can access and use software applications without the need for installation or local infrastructure.

Virtualization

Virtualization is a technology that enables the creation of virtual resources, such as virtual machines or virtual networks, from physical resources. It allows for efficient utilization of computing resources and facilitates scalability.

Algorithm

An algorithm is a step-by-step procedure or set of rules designed to solve a specific problem or perform a specific task. In the context of AI, algorithms are used to train models, make predictions, and process data.

Genetic Algorithms

Genetic algorithms are optimization algorithms inspired by the process of natural selection and genetics. They iteratively evolve a population of potential solutions to find the best solution to a given problem.
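
The evolve-and-select loop can be sketched on the classic "OneMax" toy problem, where the goal is a bit string containing as many 1s as possible. The population size, mutation rate, and the specific selection and crossover choices below are assumptions; real genetic algorithms vary widely in these details.

```python
import random

# Toy genetic algorithm for OneMax: maximize the number of 1s in a bit string.

def fitness(bits):
    return sum(bits)

def evolve(n_bits=12, pop_size=20, generations=30, mutation_rate=0.05, seed=0):
    rng = random.Random(seed)
    population = [
        [rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)
    ]
    best_per_generation = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        best_per_generation.append(fitness(population[0]))
        parents = population[: pop_size // 2]  # selection: keep the fitter half
        children = [population[0][:]]          # elitism: best survives unchanged
        while len(children) < pop_size:
            mother, father = rng.sample(parents, 2)
            cut = rng.randrange(1, n_bits)     # single-point crossover
            child = mother[:cut] + father[cut:]
            # Mutation: flip each bit with a small probability.
            child = [1 - b if rng.random() < mutation_rate else b for b in child]
            children.append(child)
        population = children
    best = max(population, key=fitness)
    best_per_generation.append(fitness(best))
    return best, best_per_generation

best, history = evolve()
print(fitness(best), history[0])
```

Because the best individual is always copied into the next generation, the best fitness recorded in `history` can never decrease from one generation to the next.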

Bayesian Networks

Bayesian networks, also known as belief networks, are a type of probabilistic graphical model that represents the probabilistic relationships among variables as a directed acyclic graph. They use Bayesian inference to make predictions or decisions.
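
As a minimal sketch of Bayesian inference, consider a two-node network in which Rain influences WetGrass. The probabilities and the function name below are invented for illustration; the calculation itself is Bayes' rule applied to the network's conditional probabilities.

```python
# Toy Bayesian network: Rain -> WetGrass.
# All probabilities are made-up example values.
p_rain = 0.2                            # P(Rain)
p_wet_given = {True: 0.9, False: 0.1}   # P(WetGrass | Rain), P(WetGrass | no Rain)

def posterior_rain_given_wet():
    """Compute P(Rain | WetGrass) with Bayes' rule."""
    joint_rain = p_rain * p_wet_given[True]            # P(Rain, Wet)
    joint_no_rain = (1 - p_rain) * p_wet_given[False]  # P(no Rain, Wet)
    return joint_rain / (joint_rain + joint_no_rain)

posterior = posterior_rain_given_wet()
print(round(posterior, 3))  # prints 0.692
```

Observing wet grass raises the probability of rain from the prior of 0.2 to roughly 0.69, which is the kind of belief update such networks perform across many variables.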

Support Vector Machines (SVM)

Support Vector Machines (SVM) are supervised learning models used for classification and regression tasks. They find an optimal hyperplane that separates data points into different classes or predicts continuous values.

Neural Networks

Neural networks are computational models inspired by the structure and functioning of the human brain. They consist of interconnected artificial neurons that process and transmit information.

Perceptron

A perceptron is the simplest form of a neural network, consisting of a single artificial neuron. It takes multiple inputs, applies weights and a bias, and produces an output based on an activation function.
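
The description above maps almost directly onto code. The sketch below trains a perceptron with a step activation on the logical AND function using the classic perceptron learning rule; the learning rate, epoch count, and names are assumptions for illustration.

```python
# Toy perceptron with a step activation, trained on logical AND.

def predict(weights, bias, inputs):
    """Weighted sum plus bias, passed through a step activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if z > 0 else 0

def train_perceptron(samples, epochs=20, learning_rate=1.0):
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            # Perceptron rule: nudge weights and bias toward the target.
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

and_samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(and_samples)
predictions = [predict(weights, bias, x) for x, _ in and_samples]
print(predictions)  # prints [0, 0, 0, 1]
```

AND is linearly separable, so a single perceptron can learn it; a function such as XOR is not, which is one motivation for networks with multiple layers.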

Convolutional Neural Networks (CNN)

Convolutional Neural Networks (CNN) are specialized neural networks designed for processing grid-like data, such as images or time series. They utilize convolutional layers to extract spatial and temporal patterns.
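
The core operation of a convolutional layer can be sketched as sliding a small kernel over an image and summing the element-wise products. The image, the kernel, and the function name `conv2d_valid` are illustrative assumptions; as in most deep learning libraries, the kernel is not flipped, so this is technically cross-correlation. A real CNN learns its kernel values during training rather than using hand-picked ones.

```python
# Toy 2-D convolution (no padding, stride 1) on nested lists.

def conv2d_valid(image, kernel):
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            # Sum of element-wise products over the kernel-sized window.
            sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh)
                for dj in range(kw)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

# A 4x4 image with a vertical edge between the dark (0) and bright (1) halves.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# A hand-picked kernel that responds to left-to-right brightness increases.
kernel = [
    [-1, 1],
    [-1, 1],
]
feature_map = conv2d_valid(image, kernel)
print(feature_map)  # prints [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The nonzero column in the output marks where the edge sits, which is the sense in which convolutional layers extract spatial patterns.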

Recurrent Neural Networks (RNN)

Recurrent Neural Networks (RNN) are neural networks designed to process sequential or time-series data. They have recurrent connections that allow information to persist over time, enabling them to capture temporal dependencies.
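
To show how a recurrent connection lets information persist, here is a minimal sketch of a single-unit RNN with scalar weights. The weight values and the function name `rnn_forward` are assumptions; real RNNs use weight matrices learned from data.

```python
import math

# Toy recurrent network: one hidden unit with scalar weights.

def rnn_forward(inputs, w_x=1.0, w_h=0.5, bias=0.0):
    """Return the hidden state after each time step."""
    hidden = 0.0
    states = []
    for x in inputs:
        # The new state depends on the current input AND the previous state.
        hidden = math.tanh(w_x * x + w_h * hidden + bias)
        states.append(hidden)
    return states

states = rnn_forward([1.0, 0.0, 0.0])
print(states)
```

Only the first input is nonzero, yet the later hidden states remain positive and fade gradually, because each state is carried forward through the recurrent weight `w_h`.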

Long Short-Term Memory (LSTM)

Long Short-Term Memory (LSTM) is a type of RNN architecture that mitigates the vanishing gradient problem by using gated memory cells, allowing it to learn long-term dependencies. It is commonly used in tasks involving sequential data, such as natural language processing.

FAQs (Frequently Asked Questions)

1. What is the difference between artificial intelligence and machine learning?

Artificial intelligence is a broad field that encompasses the development of intelligent systems capable of performing tasks that typically require human intelligence. Machine learning, on the other hand, is a subset of AI that focuses on enabling machines to learn from data and improve their performance without being explicitly programmed.

2. How does deep learning differ from other machine learning techniques?

Deep learning is a subfield of machine learning that uses neural networks with multiple layers. Whereas many traditional machine learning techniques rely on manually engineered features, deep learning enables machines to learn hierarchical representations directly from data, which has proven highly effective in tasks such as image and speech recognition.

3. What is the role of data in AI and machine learning?

Data plays a critical role in AI and machine learning. It serves as the fuel for training and improving machine learning models. The quality, quantity, and diversity of data have a significant impact on the accuracy and performance of AI systems.

4. How does computer vision contribute to AI?

Computer vision enables machines to interpret and understand visual information from images or videos. It plays a crucial role in applications such as object detection, image recognition, and autonomous vehicles.

5. What are some ethical considerations in AI?

Ethical considerations in AI include issues such as bias in algorithms, privacy concerns, and the impact of AI on employment. It is important to develop AI systems that are fair, transparent, and aligned with ethical principles to ensure responsible and beneficial use of AI technologies.

Conclusion

This AI glossary provides an overview of key terms and concepts related to AI. From foundational concepts like artificial intelligence and machine learning to specific domains like natural language processing, computer vision, robotics, and data science, these terms cover the breadth of AI’s applications and techniques. Understanding these terms is essential for anyone interested in AI and its impact on various industries and society as a whole.

Disclaimer: The above AI glossary is a compilation of formal definitions as available online via various sources.